The Story of Counting (Part 2): Rise of the Machines

Sixteenth-century counting tech looks a little different from today’s.

In last week’s SSCS Blog we looked at how human beings figured out how to count. We stopped at the creation of algebra: a way to count without seeing what had to be counted.

Offloading the Work to Machines

Problem was, civilization continued growing. Fast. More and larger trade centers made commerce logistically challenging. Necessities like grain storage, land measurement, and tracking worker time outgrew the limits of the human mind. Calculations became tediously repetitive, too. The result: accuracy left a lot to be desired.[1]

So merchants, tradesmen, and other early retailers turned to machines for help. Not to an abacus or Chinese counting rods,[2] where people still do the counting in their own heads with the help of a tool. No, we’re talking about handing the calculations themselves over to machines, an effort to remove the human being from the equation.

Enter the Pascaline

1 Blaise Pascal, inventor of the Pascaline

Two of the earliest counting machines go hand in hand because they were both mechanical and were invented just a generation apart in the seventeenth century. A Frenchman named Blaise (not Pedro) Pascal (above) came up with the first of them in the 1640s and figured he should name it after himself: The Pascaline. Its gears carried digits from one wheel to the next, so that turning a wheel past 9 nudged its neighbor forward, which made addition and subtraction possible. But even though it looked complicated, its calculations proved limited, and for something purported to be automatic, it was relatively labor-intensive.

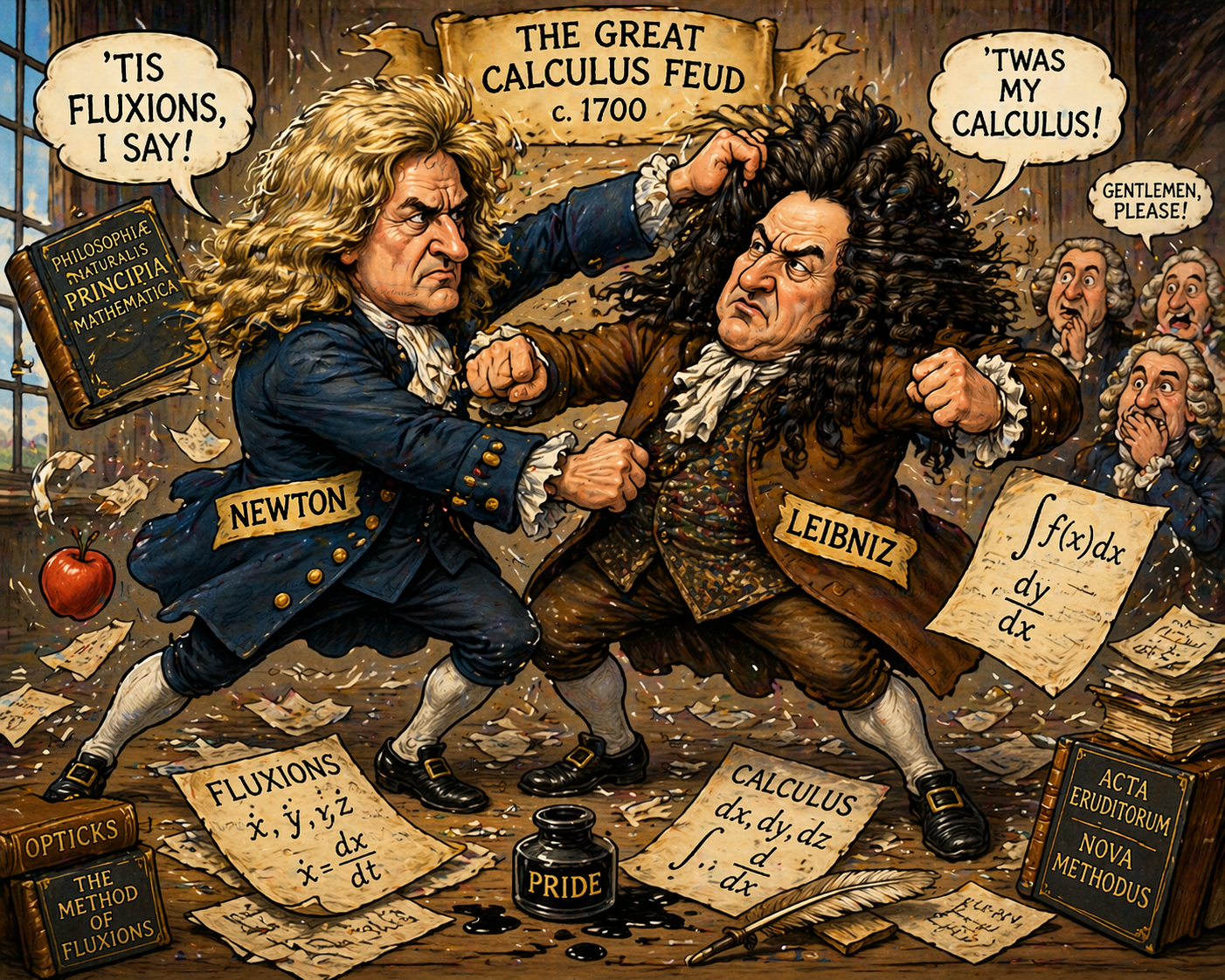
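Curious what “carrying over digits” actually means? Here’s a toy Python sketch (our own simplification, not Pascal’s real gearing) that treats the machine as a row of wheels numbered 0 through 9. Adding a number turns each wheel, and any wheel that rolls past 9 resets to 0 and nudges its left-hand neighbor forward one step. The function name `add_on_wheels` is made up for illustration.

```python
def add_on_wheels(wheels: list[int], amount: int) -> list[int]:
    """Add a non-negative whole number to a row of decimal wheels.

    Toy model only: wheels[-1] is the ones wheel, wheels[-2] the tens, and so on.
    When a wheel rolls past 9 it resets and nudges its left-hand neighbor forward
    one step. Anything carried past the leftmost wheel is lost (overflow).
    """
    wheels = wheels[:]  # work on a copy, leave the caller's list alone
    position = len(wheels) - 1
    while amount > 0 and position >= 0:
        wheels[position] += amount % 10  # dial in one digit of the amount
        amount //= 10
        # Ripple the carry leftward, one wheel at a time.
        carry_pos = position
        while carry_pos >= 0 and wheels[carry_pos] > 9:
            wheels[carry_pos] -= 10
            if carry_pos > 0:
                wheels[carry_pos - 1] += 1
            carry_pos -= 1
        position -= 1
    return wheels


print(add_on_wheels([0, 0, 9, 9], 1))    # [0, 1, 0, 0]
print(add_on_wheels([0, 4, 5, 7], 168))  # [0, 6, 2, 5]
```

In the first example, a single nudge ripples across two wheels at once. That cascade is exactly the kind of carry the Pascaline’s gears had to handle mechanically.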

Newton Vs. Leibniz and the Stepped Reckoner

The second counting machine was invented by one of the most influential mathematicians of all time, even though he’s not a household name: Gottfried Leibniz. For much of his later life he was locked in a big feud with another, more famous mathematician, the guy who discovered gravity,[3] Sir Isaac Newton.

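Why were they fighting? Each had developed his own version of the same new mathematics, they argued bitterly over who had gotten there first, and each wanted the European scientific community to adopt his system as the standard. Leibniz’s version won out in the long run: we still use his notation, and his name for the system, calculus, is the one that stuck. (Newton called his version “the method of fluxions.”)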

As for that counting machine Leibniz invented, it didn’t have the staying power of his math system, but it was an improvement: it introduced a stepped drum (now known as the Leibniz wheel) that let the machine carry out multiplication and division, as repeated addition and subtraction, automatically.[4] It had a way cooler name, too: The Stepped Reckoner.
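The Stepped Reckoner still needed a person at the crank. Roughly speaking, each turn added the number set on the machine to a running total, and shifting the carriage moved the work one decimal place over; division ran the same idea in reverse, with repeated subtraction. Here’s a hedged Python sketch of that procedure. It models how the machine was operated, not the drum’s gearing, and the function names are hypothetical.

```python
def reckoner_multiply(multiplicand: int, multiplier: int) -> tuple[int, int]:
    """Multiply by repeated addition, shifting one decimal place per multiplier digit.

    Returns (product, crank_turns). Assumes non-negative whole numbers.
    """
    accumulator, crank_turns, shift = 0, 0, 0
    while multiplier > 0:
        digit = multiplier % 10
        for _ in range(digit):
            accumulator += multiplicand * 10 ** shift  # one turn of the crank
            crank_turns += 1
        multiplier //= 10
        shift += 1  # shift the carriage one decimal place
    return accumulator, crank_turns


def reckoner_divide(dividend: int, divisor: int) -> tuple[int, int]:
    """Divide by repeated subtraction, working from the highest decimal place down.

    Returns (quotient, remainder). Assumes dividend >= 0 and divisor > 0.
    """
    if divisor <= 0:
        raise ValueError("divisor must be positive")
    quotient, remainder = 0, dividend
    shift = max(len(str(dividend)) - len(str(divisor)), 0)
    while shift >= 0:
        step = divisor * 10 ** shift
        while remainder >= step:  # subtract until this decimal place is exhausted
            remainder -= step
            quotient += 10 ** shift
        shift -= 1
    return quotient, remainder


print(reckoner_multiply(457, 23))  # (10511, 5)
print(reckoner_divide(10511, 23))  # (457, 0)
```

Multiplying 457 by 23 takes just five crank turns (three, then two more after a shift) instead of twenty-three, which is why the shifting carriage mattered.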

From Calculating to Computing

These early machines, however, weren’t very practical. They could calculate, as far as that went, but they still required almost constant attention and guidance from a human to work. Even in these pre-digital times, people were looking for something that could execute a whole string of instructions (a routine), sometimes more than one at a time, without any need for intervention. Such an automatic process came to be called computing.[5]

If you’re a Boomer who majored in computer science in the mid-1970s, you probably have not-so-fond memories of standing in long lines at the computer lab late at night, waiting for access to the school’s (only) mainframe so you could run your homework on punch cards.

Little did you know that the technology you were using was more than 170 years old, invented not by a mathematician but by a weaver: another Frenchman, Joseph Marie Jacquard, who also named his invention after himself (the Jacquard loom). Each punch card’s pattern of holes told the loom which threads to lift for one row of weaving, so a chain of cards could reproduce a specific pattern. Change the cards and you change the cloth, without rebuilding the loom. A truly programmable solution.

2 Punch cards used in a Jacquard loom. Image by Dragfyre (2011), via Wikimedia Commons (CC BY-SA 3.0).

The First Computer Programmer

The first punch card system developed specifically for mathematical purposes came about 30 years after the Jacquard loom, when Charles Babbage designed the Analytical Engine. It was more proof of concept than anything else, since it was never finished.

It did, however, offer a significant new way to count. Although it still relied on gears, levers, and drums, it got a lot of concepts right within those limitations, like a central place for processing calculations (the “Mill”) and a separate place for storing information (the “Store”).[6]
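The Mill/Store split is easier to see in a toy model. In this Python sketch the Store is a dictionary of numbered variables and the Mill is a small table of arithmetic operations; a program is a list of instructions saying which operation to perform, which Store variables to read, and where to put the result. The instruction format, the variable names (V1, V2, and so on), and the `run` function are our own illustrative assumptions, loosely inspired by Babbage’s design rather than a faithful reconstruction of it.

```python
from operator import add, mul, sub, truediv

# The Mill: the only place arithmetic happens.
MILL = {"+": add, "-": sub, "*": mul, "/": truediv}


def run(program: list[tuple[str, str, str, str]], store: dict[str, float]) -> dict[str, float]:
    """Run each instruction in order: fetch from the Store, compute in the Mill, store the result.

    Each instruction is (operation, first_input, second_input, destination).
    """
    store = dict(store)  # copy, so the original Store contents are untouched
    for op, src_a, src_b, dest in program:
        store[dest] = MILL[op](store[src_a], store[src_b])
    return store


# Hypothetical Store with numbered variables, and a two-step program computing (V1 + V2) * V3.
initial_store = {"V1": 6, "V2": 4, "V3": 5, "V4": 0, "V5": 0}
program = [
    ("+", "V1", "V2", "V4"),  # V4 = V1 + V2
    ("*", "V4", "V3", "V5"),  # V5 = V4 * V3
]

result = run(program, initial_store)
print(result["V5"])  # 50
```

Notice that `run` itself never changes; to compute something else, you just hand it a different list of instructions.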

Those conceptual breakthroughs probably wouldn’t have gotten much further, however, if it weren’t for the first computer programmer, Augusta Ada King, Countess of Lovelace.[7]

3 Portrait of Ada King, Countess of Lovelace (c. 1840), the first computer programmer. Attributed to Alfred Edward Chalon. Courtesy of the Science Museum Group, via Wikimedia Commons.

First, she wrote up a description of how the machine would theoretically work so that it could be circulated among others. But she earns the title of first programmer because she created a step-by-step set of instructions (using symbols as well as numbers) that a machine could follow to solve a problem automatically,[8] even though that machine didn’t exist yet. It wasn’t even 1850.

The question remains, then: how did we get from here to the Digital Age? It’s an interesting journey, as we’ll reveal next week in the third and final part of this series.

[1] Boyer, Carl B., and Uta C. Merzbach. A History of Mathematics. Wiley.

[2] See last week’s blog.

[3] Whether he really did get hit on the head with an apple is anyone’s guess.

[4] But then he hadn’t heard of AI.

[5] Compute, from Latin computāre (“to reckon, calculate”), is attested in English from the early 17th century (Oxford English Dictionary).

[6] Anyone interested in more details about how the thing almost worked can find a very basic explanation of the Analytical Engine courtesy of Stanford University.

[7] And Lord Byron’s daughter!

[8] Called an algorithm.
