The Story of Counting (Part 3): The Quiet Revolution

Thanks to solid state technology, counting has become invisible, instantaneous, and universal, with unprecedented scope.

Welcome to the last part of the SSCS Blog’s trilogy on counting, a topic that sounds basic, but is, as c-store operators know, far from it. It’s best read after Part 1 and Part 2.

Old-school cash registers represent the last generation of mechanical counting devices. Anyone who remembers them can probably guess that they couldn’t keep up with the counting speed and volume that nearly everything requires today.

Surprising, then, that the transition from mechanical counting systems to electrical ones really started back in the 1880s, born out of a specific need: the counting requirements of the U.S. Census.

Herman Hollerith and International Business Machines

1 Hollerith Tabulating Machine, c. 1890. Photograph courtesy of the Computer History Museum, Mountain View, CA.

The 1880 census had taken eight years to complete by hand. Even that wouldn’t be possible come 1890. So Buffalo, New York, inventor Herman Hollerith applied electricity to the concept of the punch card and came up with an electromechanical tabulating machine. It translated each position on a punch card into one of two electrical signals: on or off.

Punch cards were placed under a grid of pins. Each possible hole position lined up with a pin. When a pin encountered a hole, it passed through, touching a pool of mercury. That completed an electrical circuit[1], which triggered a signal: “switched on.” No hole, no trigger, no signal: “switched off.” The positions of the on/off signals formed a pattern for each card. The machine translated those patterns into counts.
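The pin-and-hole idea described above can be sketched in a few lines of Python. This is only an illustration, not a model of Hollerith's actual card layout: the position names, the sample cards, and the functions `read_card` and `tabulate` are all hypothetical.

```python
# Hypothetical card layout: each pin position stands for one possible answer.
POSITIONS = ["male", "female", "age_under_20", "age_20_plus"]

def read_card(holes):
    """Return one on/off signal per pin (True = pin found a hole, circuit closed)."""
    return [position in holes for position in POSITIONS]

def tabulate(cards):
    """Advance the counter for every position whose circuit closes."""
    counts = {position: 0 for position in POSITIONS}
    for holes in cards:
        for position, is_on in zip(POSITIONS, read_card(holes)):
            if is_on:
                counts[position] += 1
    return counts

# Three made-up cards, each described by the set of positions punched out.
cards = [
    {"male", "age_under_20"},
    {"female", "age_20_plus"},
    {"female", "age_under_20"},
]

totals = tabulate(cards)
print(totals)
```

Each card is reduced to a row of on/off signals, and counting is nothing more than adding one wherever a signal is on.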

It was a huge success. The 1890 census took one year instead of eight. Results were available in one month, with a sharp reduction in human error. Hollerith’s Tabulating Machine Company would become, after a 1911 merger and a 1924 renaming, International Business Machines (IBM).

The Father of the Information Age

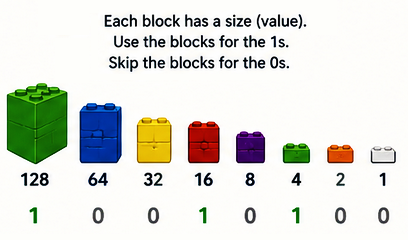

It took engineers nearly another 50 years to carry on/off pattern recognition into the world of numbers: on=1; off=0. Strings of just those two values could be combined to represent any number.

The way it worked was kind of like counting with children’s plastic bricks, where each brick has a value (1, 2, 4, 8, 16, 32, 64, and so on), and the signal either includes that value in the count (1) or leaves it out (0), as illustrated at right.
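The brick analogy can be tried out in a short Python sketch. It assumes seven bricks, worth 64 down to 1, which is enough to count from 0 to 127; the names `BRICK_VALUES`, `signals_to_count`, and `count_to_signals` are made up for this example.

```python
# Seven hypothetical bricks, largest first. Each is either included (1) or not (0).
BRICK_VALUES = [64, 32, 16, 8, 4, 2, 1]

def signals_to_count(signals):
    """Add up the value of every brick whose signal is on (1)."""
    return sum(value for value, bit in zip(BRICK_VALUES, signals) if bit == 1)

def count_to_signals(count):
    """Work out which bricks are needed to build a given count."""
    if not 0 <= count <= sum(BRICK_VALUES):
        raise ValueError("count must be between 0 and 127 for seven bricks")
    signals = []
    for value in BRICK_VALUES:
        if count >= value:
            signals.append(1)   # this brick fits, so include it
            count -= value
        else:
            signals.append(0)   # this brick is too big, so leave it out
    return signals

print(signals_to_count([0, 0, 0, 1, 1, 0, 1]))  # bricks 8 + 4 + 1
print(count_to_signals(13))
```

Thirteen, for instance, is the 8, 4, and 1 bricks switched on and everything else switched off.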

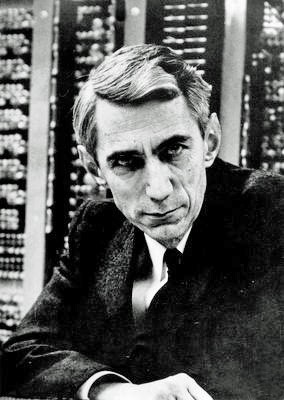

2 Claude Shannon (photo by Konrad Jacobs, December 2, 2015; licensed under CC BY‑SA 2.0 Germany)

This took some serious math skills to figure out, and an MIT mathematician, Claude Shannon, the “Father of the Information Age,”[2] did exactly that. His theories and proofs of concept established the basics of digital electronics and computer architecture.[3] Everything that comes next proceeds right from them.

This is really when counting becomes indistinguishable from computerization, a quiet revolution in approach. With the basics in place, an incredible 100-year journey began: constantly increasing computing speed and power while shrinking the space needed to provide it.

The Golden Age of Mainframe Juggernauts

The Ancient Greeks first imagined the vacuum, it became a demonstrated physical reality in the 1600s, and the first commercial vacuum tube was available at the turn of the 20th century.

So why did vacuum tubes take until the 1940s to be mass-manufactured for computer systems? It’s because instead of relying on pulled levers, punch cards, or pins to signal 1/0, they fired electrons. Electron paths were difficult to make consistent and repeatable. Once harnessed and controlled by engineers, though, they expanded computing exponentially.

The widespread use of vacuum tubes ushered in the first age of mainframe computing, with historical mainframe juggernauts like Colossus (1943 – computerized codebreaking), ENIAC (1945 – first programmable computer) and UNIVAC (1951 – first commercial mainframe sold). These systems used thousands of vacuum tubes each; ENIAC alone had roughly 18,000.

A Computer in Your Pocket

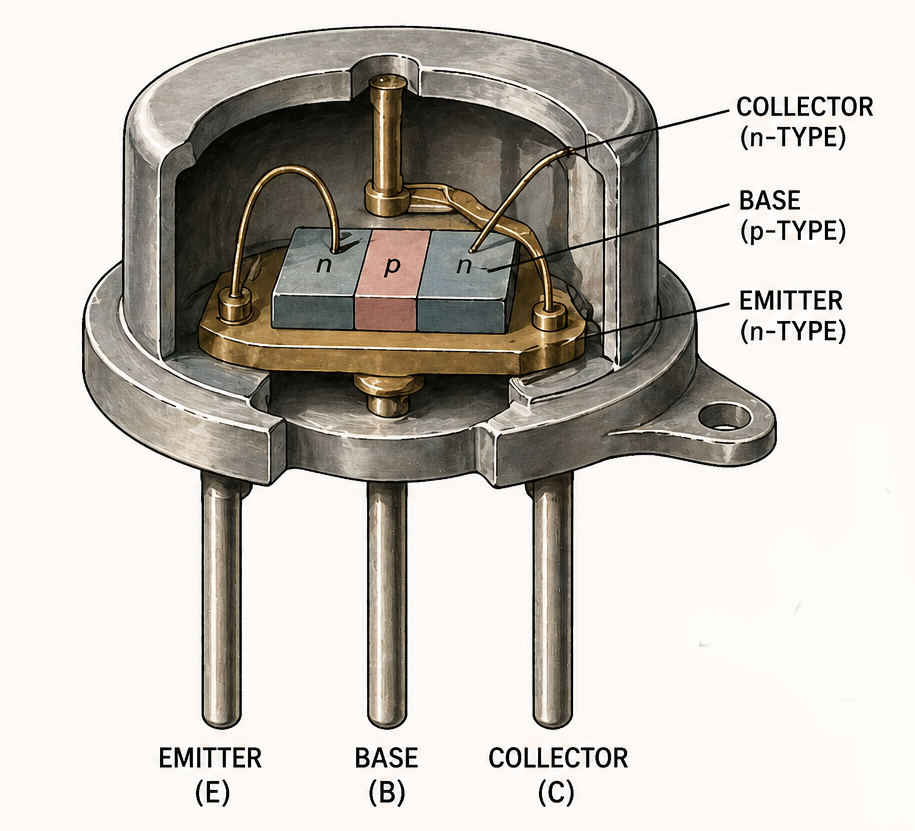

But still it wasn’t enough. Vacuum tubes were large, fragile, and power-hungry, far from ideal, which is why the last major breakthrough we’re covering today was about size as well as speed. It took place in 1947, when John Bardeen, Walter Brattain, and William Shockley at Bell Labs created the first transistor.[4]

3 Early “Junction” Transistor. William Shockley, “The Theory of p-n Junctions in Semiconductors,” Bell System Technical Journal, 1949.

Instead of relying on heated vacuum tubes, the transistor used solid materials to control electrical signals, making it smaller, faster, and far more reliable for performing a vast number of calculations in a short time. Solid state technology would evolve quickly: components shrank and multiplied and were combined into microprocessors, entire computing engines on a single chip.

This made it possible to move computing out of specialized rooms and into everyday business. Personal computers brought that solid state power to the desktop, and, as SSCS customers know, mobile devices put it in the palm of your hand.

It’s a pretty incredible transformation when you think about it: what once required visible effort and specialized machines now happens quietly in the background. Computing is no longer something you see. It simply happens: invisible, instantaneous, and universal. At SSCS, we’re glad to be able to bring you a set of tools that contributes to exactly that, one that continues to evolve as we move forward.

[1] An electrical circuit is a complete path that lets electricity move from a source, through something, and back again.

[2] Shannon, Claude E. “A Mathematical Theory of Communication.” Bell System Technical Journal, vol. 27, 1948, pp. 379–423, 623–656.

[3] Shannon, Claude E. “A Symbolic Analysis of Relay and Switching Circuits.” Master’s thesis, Massachusetts Institute of Technology, 1938.

[4] Bardeen, J., and W. H. Brattain. “The Transistor, a Semi-Conductor Triode.” Physical Review, vol. 74, no. 2, 1948, pp. 230–231. https://doi.org/10.1103/PhysRev.74.230
